Key considerations for contact tracing applications

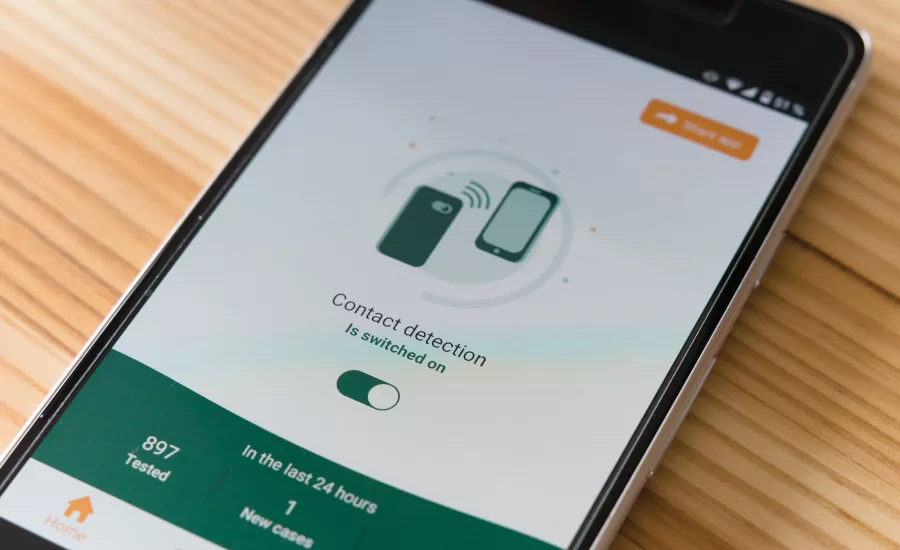

In the era of COVID-19, governments, major tech companies, and small developers are creating contact tracing applications and tools in an attempt to contain the outbreak and re-open safely. Aiming to assist manual tracers, the majority of applications feature Bluetooth tracking. This includes Apple and Google’s joint effort, which identifies when two users are in close contact but doesn’t record locations, and which is decentralized to limit the data that users upload. Apple and Google’s API has been deployed by a variety of governments, including the UK, Italy, and multiple states across the U.S.

To many citizens, this all may feel intrusive, but contact tracing isn’t new -- it’s an age-old tool, usually done entirely manually, for public health officials controlling emerging diseases. Today’s advancements in technology could help augment manual contact tracing efforts. Yet in these early stages, it’s difficult to measure the effectiveness of these applications, and major roadblocks have arisen that may deter users or make the technology more of a headache than it’s worth. Further, as applications are implemented across the world, a familiar debate arises over privacy and potential avenues for abuse.

As with any developing technology that is expanding its presence in our day-to-day lives, many of these issues will remain an ongoing concern for governments and users. But it’s crucial to keep analyzing these technologies and efforts to see whether applications are having the intended impact and supporting manual tracing efforts, or whether they are simply creating more issues.

While the jury’s out on whether these applications will be an effective tool for contact tracers, or whether the majority of citizens will fully embrace them, contact tracing looks likely to become a part of our daily lives. To keep these technologies on the right track, developers, policymakers and stakeholders must ask questions to measure effectiveness, while addressing key issues to prevent abuse and secure consumer data.

Abuse and Misinformation

From major breaches to individuals manipulating the system, apps that keep track of our most personal data pique the interest of bad actors. This could look like trolling, where an individual reports fake symptoms or contact, sparking panic that a large number of people could have been infected. Or it could take the form of replay attacks, where bad actors intercept legitimate data in transit on an otherwise secure network, such as reports of positive cases, then resend or misdirect it, creating false positives. Tracers assigned to manage and use the data obtained from these apps may not know how to identify an attack -- whether sophisticated or not -- so it is imperative that all channels flowing into a contact tracing application database are secure, and that relevant parties are trained to spot potential threats.
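To make the replay scenario concrete, here is a minimal sketch of one common defense: the server accepts a report only if it carries a valid signature, a recent timestamp, and a nonce it has not seen before. This is an illustration under stated assumptions, not how any particular contact tracing app works. The names `sign_report`, `ReportVerifier`, and `MAX_AGE_SECONDS`, the shared key, and the five-minute window are all hypothetical.

```python
import hashlib
import hmac
import time

MAX_AGE_SECONDS = 300  # hypothetical freshness window for a report


def sign_report(key: bytes, payload: bytes, nonce: str, timestamp: int) -> str:
    """Return an HMAC-SHA256 tag covering the payload, nonce, and timestamp."""
    message = b"|".join([payload, nonce.encode(), str(timestamp).encode()])
    return hmac.new(key, message, hashlib.sha256).hexdigest()


class ReportVerifier:
    """Rejects reports that are forged, stale, or already seen (replayed)."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._seen_nonces = set()  # a real service would expire old entries

    def accept(self, payload: bytes, nonce: str, timestamp: int,
               tag: str, now=None) -> bool:
        now = int(time.time()) if now is None else now
        expected = sign_report(self._key, payload, nonce, timestamp)
        if not hmac.compare_digest(expected, tag):
            return False  # tampered with, or signed with the wrong key
        if abs(now - timestamp) > MAX_AGE_SECONDS:
            return False  # too old (or too far in the future) to trust
        if nonce in self._seen_nonces:
            return False  # same report sent twice: treat as a replay
        self._seen_nonces.add(nonce)
        return True
```

A captured report that is resent unchanged fails the nonce check, and one that is held back and sent later fails the freshness check. Neither check helps against a user who submits a false report in the first place, which is why the training point above still matters.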

Access to Private Information

Depending on which data is required, most contact tracing apps, and the individuals assigned to monitor cases, have access to private location information -- more of our data than most people have ever willingly provided to health authorities before. Further, some apps include symptom tracking features, meaning that if a breach happens, health data is also potentially vulnerable. China has even used its own applications to identify individuals who break quarantine rules, raising concerns about misuse beyond COVID-19 in the future.

Another layer of data frequently needed in applications is location tracking to determine recent points of contact. In lieu of direct location tracking, many applications are opting for Bluetooth pings, but there are concerns that this approach may not be foolproof, since signals may be unreliable, as in underground public transport, or may inaccurately measure the distance between users. Additionally, many applications aren’t currently using certificate pinning, an additional safeguard for communication between the user’s app and the application’s servers or the public health authority. With pinning, the app accepts only a known server certificate or public key, which makes the encrypted traffic harder to intercept. Without certificate pinning, applications run the risk of mobile device developers or other corporations being able to access intimate health data and see statuses. Although this isn’t necessarily an issue for most, it may deter many users who are uncomfortable with this level of potential access, impacting adoption.
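As a rough illustration of what certificate pinning involves, the sketch below checks a server’s certificate against a fingerprint shipped with the client, in addition to the usual TLS validation. It is a simplified example using Python’s standard library, not the mechanism of any specific app. Mobile apps would normally rely on their platform’s own facilities, and many pin a public key rather than the whole certificate. `PINNED_SHA256`, `matches_pin`, and `connect_with_pin` are hypothetical names, and the pin value is a placeholder.

```python
import hashlib
import hmac
import socket
import ssl

# Hypothetical pin: hex SHA-256 fingerprint of the expected server certificate.
PINNED_SHA256 = "0" * 64  # placeholder, not a real fingerprint


def fingerprint(der_cert: bytes) -> str:
    """Return the hex SHA-256 fingerprint of a DER-encoded certificate."""
    return hashlib.sha256(der_cert).hexdigest()


def matches_pin(der_cert: bytes, pinned_hex: str) -> bool:
    """Compare a certificate's fingerprint with the pinned value."""
    return hmac.compare_digest(fingerprint(der_cert), pinned_hex.lower())


def connect_with_pin(host: str, pinned_hex: str, port: int = 443) -> ssl.SSLSocket:
    """Open a TLS connection and refuse it unless the certificate matches the pin."""
    context = ssl.create_default_context()  # normal CA and hostname checks still apply
    sock = socket.create_connection((host, port), timeout=10)
    tls = context.wrap_socket(sock, server_hostname=host)
    der_cert = tls.getpeercert(binary_form=True)
    if der_cert is None or not matches_pin(der_cert, pinned_hex):
        tls.close()
        raise ssl.SSLError("server certificate does not match the pinned fingerprint")
    return tls
```

The trade-off is operational: when the server’s certificate is rotated, the pinned value in the app has to be updated as well, or connections will fail.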

Performance

Every device we own depends on the company behind it. To keep these devices working and constantly improving, device developers frequently provide system updates, which can affect Bluetooth calibration. Most applications using Bluetooth tracking set thresholds for the distance between pings to determine whether or not contact was actually made. When calibration is shifted on the device itself, Bluetooth ranges change, which throws off these set thresholds. Unless the technology developer provides those calibration details to the application developer ahead of the update, the update will greatly degrade the performance of the contact tracing application, without any control from the health authority itself, causing errors until the radius and thresholds are updated. Overall, public health authorities, application developers and device makers need to be in constant communication in order to prevent these lapses, and a broader conversation over this control is needed.
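A small numerical sketch shows why a calibration shift matters. It uses the log-distance path loss model, a common textbook approximation for turning Bluetooth signal strength (RSSI) into distance. It is not a description of what any particular app or API does. The reference signal strength at one metre, the path loss exponent, the 2 m threshold, and the function names are all assumed values chosen for illustration.

```python
def estimate_distance_m(rssi_dbm: float,
                        rssi_at_1m_dbm: float = -59.0,    # assumed reference reading
                        path_loss_exponent: float = 2.0,  # assumed, roughly free space
                        calibration_offset_db: float = 0.0) -> float:
    """Estimate distance in metres from RSSI with the log-distance model."""
    corrected = rssi_dbm + calibration_offset_db
    return 10 ** ((rssi_at_1m_dbm - corrected) / (10 * path_loss_exponent))


def is_contact(rssi_dbm: float, threshold_m: float = 2.0, **model_params) -> bool:
    """Flag a ping as a contact if the estimated distance is within the threshold."""
    return estimate_distance_m(rssi_dbm, **model_params) <= threshold_m
```

Under these assumptions, a reading of -65 dBm maps to just under 2 m and counts as a contact. If a system update makes the same device report -68 dBm at the same physical distance, the estimate rises to about 2.8 m and the contact is missed, until the application applies a matching `calibration_offset_db` of 3 dB.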

As with most technologies, nothing is foolproof -- bad actors will typically find weaknesses and exploit them. While citizens adjust to providing their data, the larger industry should take the time to identify privacy concerns, tackle potential abuse vectors, and measure the effectiveness of these contact tracing tools, and ultimately determine whether the tools are viable for general use.
